Making full effective use of new persistent memory means tearing up the rulebook

Previously, I explained why persistent memory didn't fit in well with existing designs. Now, let's look at how non-traditional designs could overcome this and embrace it, using existing tools and know-how.

Put some PMEM in your computer's DIMM slots, and most of the core distinction between primary and secondary storage is lost. All the computer's storage is now directly accessible: it's right there on the processor memory bus. There's no "loading" data from "drives" into memory, and no "saving" any more. Under the water, though, they are completely traditional. They run Unix, a 1970s minicomputer OS, with millions of little files in a complex filesystem. You just can't see it.
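
As a rough illustration of what losing the load/save step means, here is a minimal Python sketch. It assumes a Unix-like system and uses an ordinary file mapped with `mmap` as a stand-in for a file on a DAX-mounted PMEM filesystem; `open_region`, `bump_counter` and `demo` are hypothetical names, not part of any PMEM API. The program never "saves" anything: it writes an integer straight into mapped memory, and the value is still there after the mapping is closed and reopened, loosely mimicking a reboot.

```python
import mmap
import os
import struct
import tempfile

# Size of the hypothetical persistent region, in bytes.
SIZE = 4096


def open_region(path: str) -> mmap.mmap:
    """Map a fixed-size file into the address space, creating it if needed.

    Assumption: an ordinary file stands in for a file on a DAX-mounted PMEM
    filesystem; on real hardware the mapping would point at the NVDIMM itself.
    """
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        os.ftruncate(fd, SIZE)  # new file: zero-filled; existing file: unchanged
        return mmap.mmap(fd, SIZE, mmap.MAP_SHARED, mmap.PROT_READ | mmap.PROT_WRITE)
    finally:
        os.close(fd)  # the mapping remains valid after the descriptor is closed


def bump_counter(region: mmap.mmap) -> int:
    """Increment a 64-bit counter at offset 0: a plain memory write, no save step."""
    (value,) = struct.unpack_from("<Q", region, 0)
    struct.pack_into("<Q", region, 0, value + 1)
    region.flush()  # msync(); on real PMEM, cache-flush instructions would play this role
    return value + 1


def demo() -> tuple[int, int]:
    """Write, drop the mapping (a stand-in for powering off), then map again."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "region.bin")
        first = open_region(path)
        a = bump_counter(first)
        first.close()  # the mapping goes away; the bytes stay
        second = open_region(path)
        b = bump_counter(second)  # carries on from where the last "session" stopped
        second.close()
        return a, b
```

Calling `demo()` counts 1 in the first "session" and 2 in the second, with no file reads or writes in the application logic. On actual NVDIMMs, the `msync()` behind `flush()` would typically give way to CPU cache-flush instructions, for instance through a library such as PMDK; that detail is outside this sketch.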

CP/M and DOS and Windows, even Unix itself, all started out as fun little proof-of-concept things that barely did anything but were fun to play around with.

The other thing is that modern FOSS Unix isn't much fun any more. It's too complicated. A mere human can no longer completely understand the whole stack. That's why we have to chop it up into bits with virtualisation: to make it manageable and scalable.

Presumably you read the intro to this talk. We now have commercial, off-the-shelf non-volatile DIMMs. Our main memory can be persistent, so who needs disk drives any more?

What's left? Well, obviously, we want to keep graphics, sound, multiple CPUs, GUIs, etc. They're nice. We need multitasking. We need memory management.

Anything that survives decades of unpopularity is worth studying. Old but still working is better than new and untried. But there was so much that I needed some kind of filter: some way to sort out what was worth a closer look.

I wrote about two dominant types of workstation, and how one type won out and thrived and the other faded into history: Lisp Machines, and Unix. Unix won: before workstations died out, replaced by generic PCs running Linux and BSD, the last generation were all Unix machines.

One of the things they showed me was object-oriented programming. They showed me that, but I didn't even see that. On top of that, the PARC researchers wrote a language called Smalltalk. This meant that it didn't need a special CPU or exotic architecture. In both cases, the whole user-facing part of the OS was built in a single language, all executing on the same runtime, from the core interpreter to the windowing system to the end-user apps.

From the Unix perspective, this sounds crazy, and some very smart people have strongly criticised me for saying this. Although these systems could compile modules for performance, compilation was abstracted away by the OS and you never needed to see it. Instead, you could inspect the code you were running, while it executed, and modify it on the fly with immediate effect.

Another interesting common element is that with the entire OS and apps in one shared environment, a single huge context, you did not need to start over every time you rebooted.
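
Python is not Smalltalk or Lisp, and its objects do not survive a reboot, but its late-bound method lookup gives a small taste of modifying running code "with immediate effect". In this minimal sketch (the `Counter` class and `patched_step` function are hypothetical), a method is replaced on a class while an instance of that class is alive, and the existing object runs the new code on its very next call, with no restart.

```python
class Counter:
    """A 'live' object that exists before its code is changed."""

    def __init__(self) -> None:
        self.count = 0

    def step(self) -> int:
        self.count += 1
        return self.count


def patched_step(self: Counter) -> int:
    """Replacement method: count in tens instead of ones."""
    self.count += 10
    return self.count


live = Counter()
before = live.step()         # original method: count goes 0 -> 1
Counter.step = patched_step  # redefine the method while `live` still exists
after = live.step()          # the same instance now runs the new code: 1 -> 11
```

The object's state (`count`) is untouched by the change; only the behaviour is swapped. In an image-based system the same idea extends to the whole environment, including the state, which is then kept rather than rebuilt at every restart.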

Oberon is a smaller, simpler, faster Pascal. Like Pascal, it's strongly typed; unlike Pascal, it's garbage-collected, and it was designed for implementing OSes. My core proposal is to cut Oberon down into a monolithic, net-bootable binary, and run Squeak on top of it. For drivers and networking and so on, there's a solid, mature, fast, low-level language with a choice of toolchains.

South Africa Latest News, South Africa Headlines

Similar News:You can also read news stories similar to this one that we have collected from other news sources.

Brooklyn Beckham and Nicola Peltz Differ on Starting a FamilyBrooklyn Beckham wants to be a young dad, but Nicola Peltz is focused on her career. Nicola's film Lola, in which she stars and directs, is being released this week.

Brooklyn Beckham and Nicola Peltz Differ on Starting a FamilyBrooklyn Beckham wants to be a young dad, but Nicola Peltz is focused on her career. Nicola's film Lola, in which she stars and directs, is being released this week.

Read more »

Cracks starting to appear at Tottenham as Chelsea find their spirit - Premier League hits and missesSky Sports' football writers assess the Premier League action from Saturday's games as cracks start to appear at Tottenham.

Cracks starting to appear at Tottenham as Chelsea find their spirit - Premier League hits and missesSky Sports' football writers assess the Premier League action from Saturday's games as cracks start to appear at Tottenham.

Read more »

How to Deal If Anxiety at Work Is Making It Hard to Do Your JobAnxiety symptoms like a racing heart and difficulty concentrating can hurt productivity in the workplace. Here’s how to manage anxiety at work.

How to Deal If Anxiety at Work Is Making It Hard to Do Your JobAnxiety symptoms like a racing heart and difficulty concentrating can hurt productivity in the workplace. Here’s how to manage anxiety at work.

Read more »

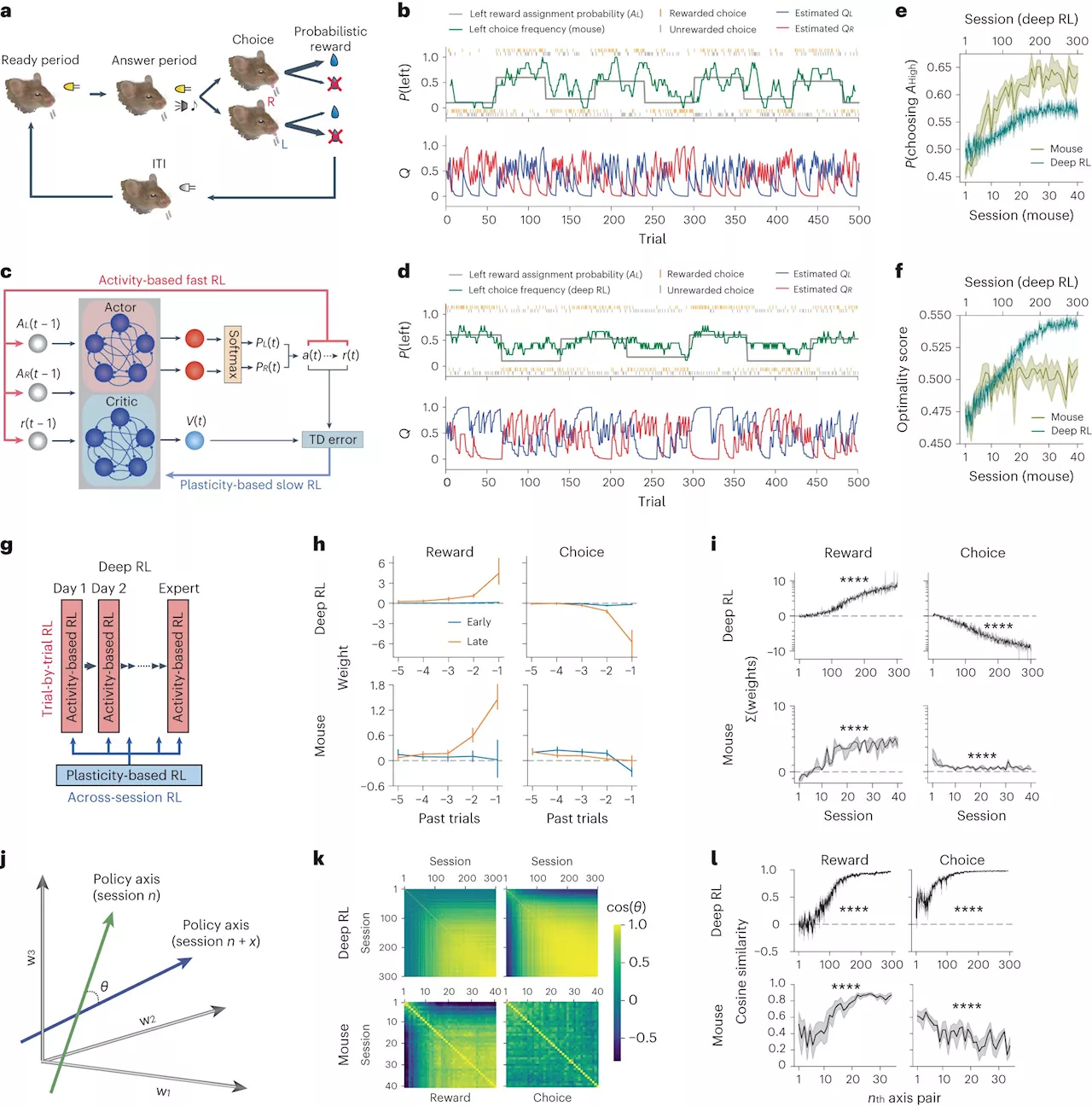

Neuroscientist uses AI to map learning, decision-making, to discover how brains workWith trial and error, repetition and praise, when a puppy hears 'Sit,' they learn what they're expected to do. That's reinforcement learning, and it's a complex subject that fascinates neuroscientist Ryoma Hattori, Ph.D., who recently joined The Herbert Wertheim UF Scripps Institute for Biomedical Innovation & Technology.

Neuroscientist uses AI to map learning, decision-making, to discover how brains workWith trial and error, repetition and praise, when a puppy hears 'Sit,' they learn what they're expected to do. That's reinforcement learning, and it's a complex subject that fascinates neuroscientist Ryoma Hattori, Ph.D., who recently joined The Herbert Wertheim UF Scripps Institute for Biomedical Innovation & Technology.

Read more »

Making my daughter headgear braces permanentSo my daughter is now 16 (17 in june) and started wearing braces 6 months ago. Two month ago she got headgear to wear

Making my daughter headgear braces permanentSo my daughter is now 16 (17 in june) and started wearing braces 6 months ago. Two month ago she got headgear to wear

Read more »

Championship: Plymouth v Leeds starts Saturday's actionFollow live text updates from 11 games in the Championship, starting with Plymouth against Leeds.

Read more »