A chatbot, by design, serves up words it predicts are the most likely responses. Read more at straitstimes.com.

SAN FRANCISCO - Microsoft’s nascent Bing chatbot turning testy or even threatening is likely because it essentially mimics what it learned from online conversations, analysts and academics said on Friday.

“So once the conversation takes a turn, it’s probably going to stick in that kind of angry state, or say ‘I love you’ and other things like this, because all of this is stuff that’s been online before.”

“Large language models have no concept of ‘truth’ – they just know how to best complete a sentence in a way that’s statistically probable based on their inputs and training set,” programmer Simon Willison said in a blog post.

Laurent Daudet, co-founder of French AI company LightOn, theorised that the chatbot seemingly-gone-rogue was trained on exchanges that themselves turned aggressive or inconsistent.

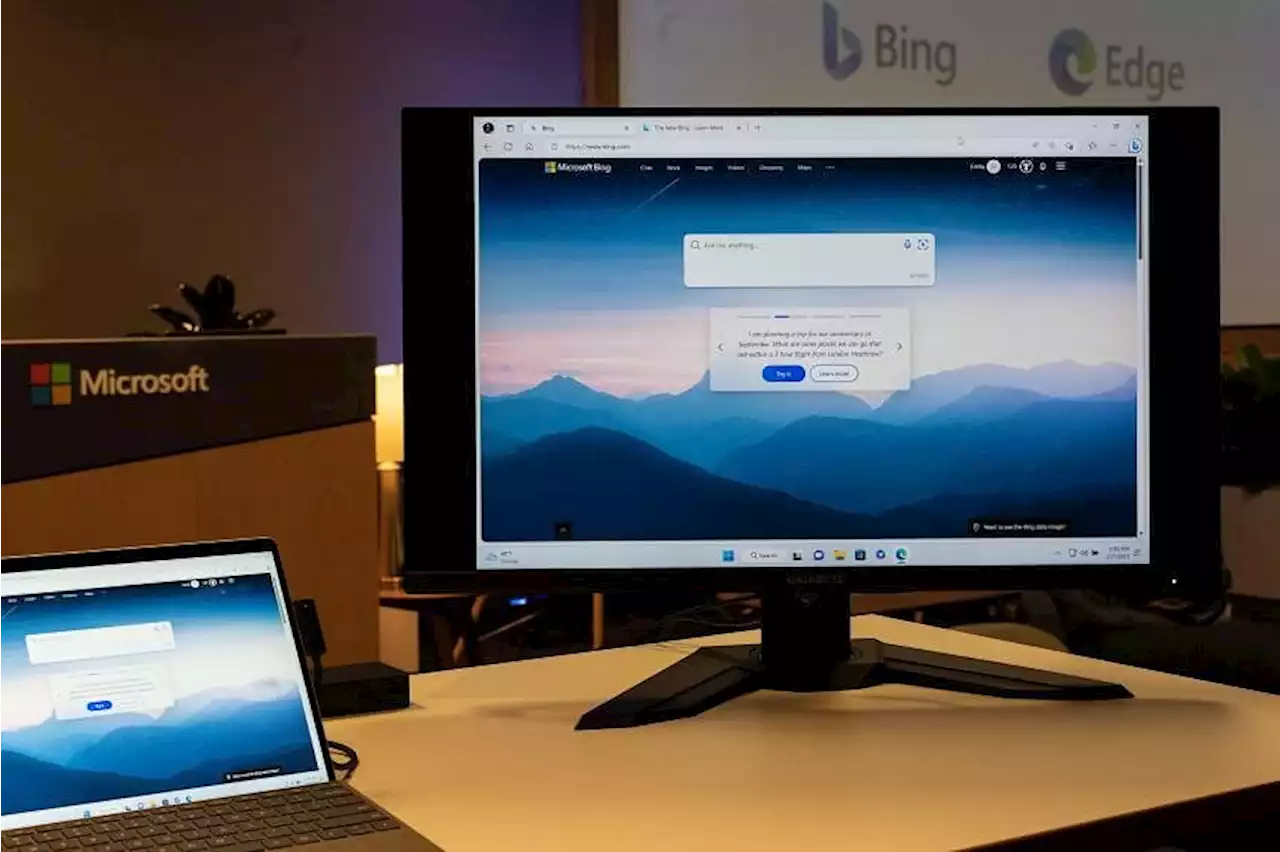

“The model at times tries to respond or reflect in the tone in which it is being asked to provide responses that can lead to a style we didn’t intend,” Microsoft said in a blog post, noting the bot is a work in progress.

The Bing chatbot said in some shared exchanges that it had been codenamed “Sydney” during development, and that it was given rules of behaviour.
